What Is Incrementality Testing? How to Know If Your Marketing Is Actually Working

What Is Incrementality?

Incrementality is the lift your campaign generates on top of organic behavior.

For example: you split 100 people evenly into two groups. The test group receives a push notification; the control group doesn't. Twenty people in the test group purchase. Ten in the control group purchase without ever seeing the message.

Of those 20 purchases in the test group, 10 would have happened regardless: that's the baseline the equally sized control group shows. The other 10 are your incremental conversions (i.e., the purchases your campaign actually caused). The baseline purchases are driven by things like organic interest, brand loyalty, and seasonal demand.
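The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, assuming the 100 people are split into two equal groups of 50; the function name `incremental_conversions` is hypothetical. Scaling the control group's conversion rate to the test group's size keeps the comparison fair even when the groups are unequal.

```python
def incremental_conversions(test_conv, test_size, control_conv, control_size):
    """Estimate the conversions a campaign caused in the test group (hypothetical helper)."""
    # Baseline: how often people convert without seeing the campaign
    baseline_rate = control_conv / control_size
    # Conversions the test group would likely have produced with no campaign
    expected_baseline = baseline_rate * test_size
    # Incremental conversions: what the test group did beyond the baseline
    incremental = test_conv - expected_baseline
    # Relative lift over the baseline (1.0 means conversions doubled)
    lift = incremental / expected_baseline
    return incremental, lift


# The push-notification example, assuming two groups of 50
inc, lift = incremental_conversions(test_conv=20, test_size=50, control_conv=10, control_size=50)
print(inc, lift)  # 10.0 1.0
```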

What Is Incrementality Testing?

Attribution shows you who converted. Incrementality testing shows you whether your campaign is the reason they converted.

Incrementality tests can be run at different levels depending on your objective:

- User-level tests measure how individuals respond to specific campaigns or channels

- Geographic (GEO) tests measure how entire markets respond, showing both online and offline impact in the same test

When to Use Incrementality Testing

Incrementality testing is most useful when you need to know whether your marketing is causing conversions, not just correlating with them. It works as part of a broader measurement approach. Marketing Mix Modeling helps set budget at a high level by identifying which channels are contributing to growth over time. Incrementality testing validates that impact at the channel or campaign level by isolating what’s actually driving lift. Conversion rate optimization then improves performance within those channels by refining creative, messaging, and user experience.

Together, they answer different parts of the same question: where to invest, what's working, and how to improve performance.

Why Incrementality Matters More Now

Most attribution models depend on user-level data to assign credit for conversions. They rely on being able to track how individuals move across devices, platforms, and sessions.

That visibility has become increasingly limited. Privacy changes, platform restrictions, and signal loss mean large portions of the user journey are no longer captured. As a result, attribution models are working with an incomplete view of performance.

Platform-reported ROAS adds another layer of complexity. Because platforms measure their own performance, they often over-attribute conversions, taking credit for outcomes that would have happened regardless.

Incrementality testing addresses both issues. Instead of relying on user-level tracking or platform-reported data, it measures the difference in behavior between exposed and unexposed groups. The result is a clearer view of what your marketing is actually driving.

How to Run an Incrementality Test

Getting actionable results from an incrementality test starts long before the test goes live. The methodology you choose, how you build your groups, and how long you run the test all determine whether the data is something you can confidently act on.

1. Define Your Objectives and KPIs

Tie your primary KPI to a business outcome like incremental revenue, cost per incremental acquisition, or incremental ROAS.

Add a secondary KPI to give you a fuller picture of campaign impact. For example, pairing incremental revenue with new customer acquisition can help clarify whether growth is coming from new or existing demand.
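One way to compute these KPIs from raw test results is sketched below. It's an illustration under stated assumptions: the `GroupResult` type, the function name, and all input numbers are hypothetical, and it assumes campaign spend applies only to the test group. Control results are scaled to the test group's size so unequal splits don't distort the comparison.

```python
from dataclasses import dataclass


@dataclass
class GroupResult:
    size: int          # users (or audience units) in the group
    conversions: int   # purchases recorded during the test window
    revenue: float     # revenue recorded during the test window


def incrementality_kpis(test: GroupResult, control: GroupResult, spend: float) -> dict:
    """Hypothetical helper: incremental revenue, cost per incremental acquisition, iROAS."""
    # Scale control outcomes up (or down) to the test group's size
    scale = test.size / control.size
    inc_conversions = test.conversions - control.conversions * scale
    inc_revenue = test.revenue - control.revenue * scale
    return {
        "incremental_conversions": inc_conversions,
        "incremental_revenue": inc_revenue,
        # Undefined when the campaign produced no incremental conversions
        "cost_per_incremental_acquisition": (
            spend / inc_conversions if inc_conversions > 0 else float("inf")
        ),
        "incremental_roas": inc_revenue / spend,
    }


kpis = incrementality_kpis(
    test=GroupResult(size=10_000, conversions=300, revenue=15_000.0),
    control=GroupResult(size=10_000, conversions=200, revenue=10_000.0),
    spend=2_500.0,
)
print(kpis)
```

A secondary KPI such as incremental new customers could be computed the same way by passing new-customer counts instead of total conversions.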

2. Choose the Right Testing Method

Not every campaign is structured the same way, and the right testing method depends on what you’re measuring, what data you have access to, and how much control you have over who sees your marketing.

A/B Lift Tests

Split your audience into a test group and a holdout group within a single platform. Most major ad platforms offer this natively.

- Best for: Quick directional insight into channel-level lift

- Things to consider: Because these tests are confined to a single platform, they don’t reflect how that channel performs within your broader media mix. Results also depend on how the platform assigns users to test and control groups, which isn’t fully transparent, making it harder to validate the accuracy of the lift.

Holdout Tests

Intentionally withhold ads from a segment of your audience to establish a clear picture of what conversions look like without your marketing influencing them.

- Best for: CRM, retargeting, email, and loyalty campaigns where you control who receives communication

- Things to consider: Your audience needs to be large enough to split into test and control groups while still producing enough conversions to measure lift. If volume is too low, the data will be difficult to interpret. You also need to ensure the holdout group isn't exposed to your marketing through other channels; if it is, the data becomes less reliable.

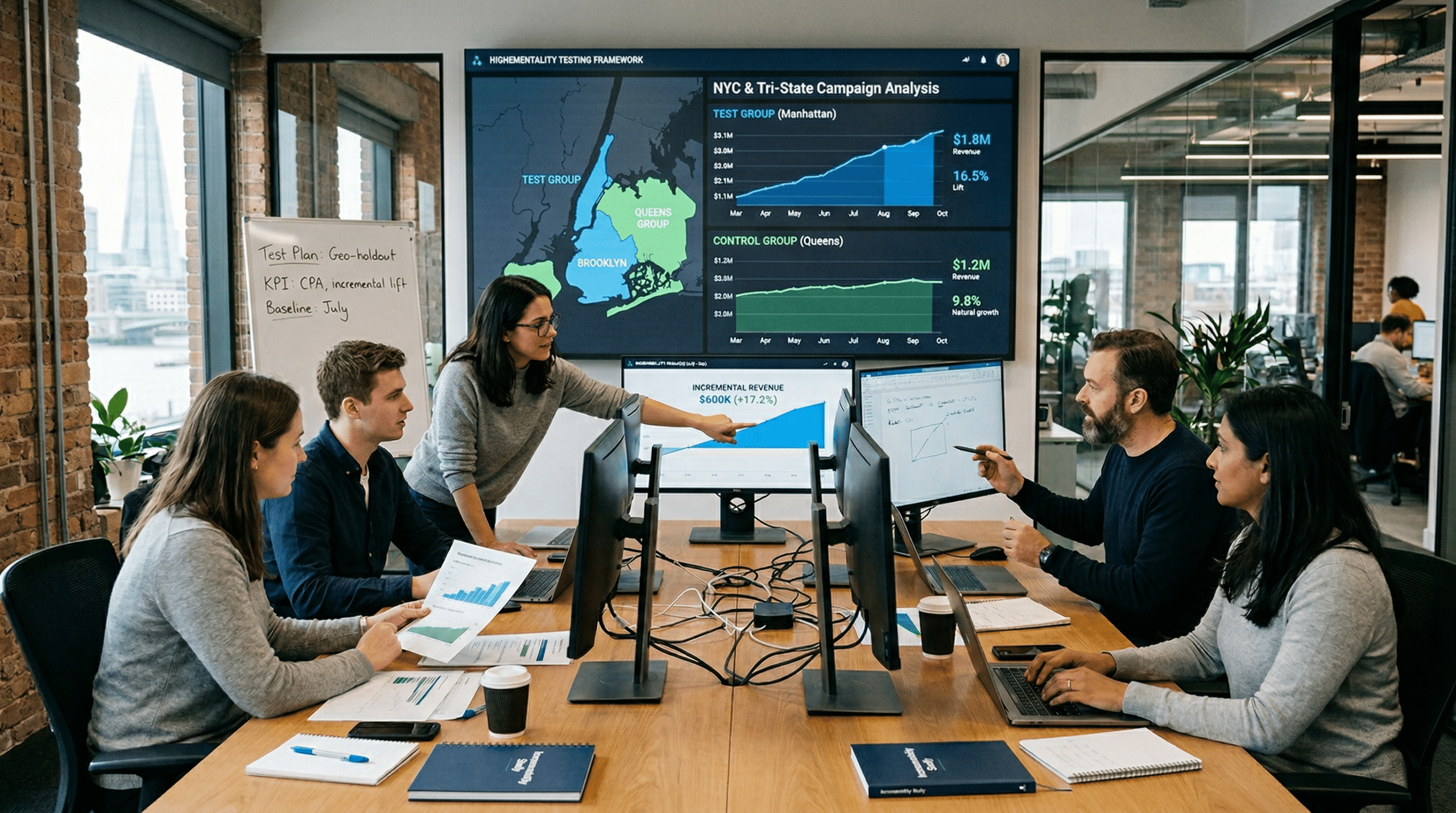
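When you control who receives communication, a common way to build a stable holdout is to hash each user ID into a bucket, so the same person always lands in the same group across sends and channels. The sketch below assumes string user IDs; the helper name, the salt value, and the 10% holdout share are hypothetical defaults, not recommendations.

```python
import hashlib


def assign_group(user_id: str, salt: str = "holdout-test-1", holdout_share: float = 0.10) -> str:
    """Deterministically assign a user to 'holdout' or 'test' (hypothetical helper)."""
    # Salting lets each new test reshuffle users independently of past tests
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1)
    bucket = int(digest[:8], 16) / 2**32
    return "holdout" if bucket < holdout_share else "test"


users = [f"user-{i}" for i in range(10_000)]
groups = {u: assign_group(u) for u in users}
holdout = [u for u, g in groups.items() if g == "holdout"]
print(len(holdout) / len(users))  # close to 0.10
```

To limit cross-channel exposure, the same holdout list would need to be applied as a suppression list in every channel you control, such as email, push, and retargeting audiences.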

GEO Tests

Divide geographic regions into test and control groups, then run the campaign only in the test regions.

- Best for: Measuring multi-channel impact

- Things to consider: Markets need to be closely matched across factors like population, historical performance, and competitive environment. Timing also matters since seasonality can influence results.
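A simple way to read a GEO test is a difference-in-differences comparison: measure how much sales changed in the test markets from a pre-period to the test period, and subtract the change in the control markets over the same weeks. Because both groups experience the same calendar, shared seasonality largely cancels out. This is a minimal sketch, not the only approach (synthetic-control and regression-based methods are also used). It assumes the test and control markets would have trended in parallel without the campaign, and the function name and weekly sales figures are hypothetical.

```python
from statistics import mean


def did_weekly_lift(test_pre, test_post, control_pre, control_post):
    """Difference-in-differences estimate of incremental weekly sales in the test markets.

    Assumes parallel trends: absent the campaign, test and control markets would
    have changed by the same amount between the two periods.
    """
    test_change = mean(test_post) - mean(test_pre)
    control_change = mean(control_post) - mean(control_pre)
    # The control markets' change is treated as what would have happened anyway
    return test_change - control_change


# Hypothetical weekly sales (units) for matched test and control markets
lift = did_weekly_lift(
    test_pre=[100, 102, 98, 100],
    test_post=[120, 118, 122, 120],
    control_pre=[80, 82, 78, 80],
    control_post=[85, 83, 87, 85],
)
print(lift)
```

Here the test markets grew by 20 units a week while the control markets grew by 5, so the estimate attributes 15 units per week to the campaign.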

3. Build Comparable Test and Control Groups

This can make or break your test. Groups must be truly comparable, not just similar on the surface.

For GEO tests, match regions on population size, historical performance, local economic conditions, and competitive presence. For holdout tests, ensure audiences are large enough and clearly separated to avoid cross-exposure.

Any meaningful difference between groups will show up in your results and make the lift harder to interpret.

4. Run the Test

Running a test for less than four weeks rarely produces data you can trust. Give purchasing behavior enough time to stabilize before drawing conclusions.
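One way to sanity-check duration before launch is to estimate how many users each group needs to detect the lift you expect, then divide by how many eligible users you reach per week. The sketch below uses the standard sample-size approximation for comparing two conversion rates with a two-sided test. The function names, baseline rate, expected lift, and weekly reach are hypothetical inputs you'd replace with your own; the four-week floor reflects the guidance above.

```python
import math
from statistics import NormalDist


def required_sample_per_group(baseline_rate, expected_lift, alpha=0.05, power=0.8):
    """Users needed per group to detect a relative lift in conversion rate (two-sided test)."""
    p_control = baseline_rate
    p_test = baseline_rate * (1 + expected_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # chance of detecting a real lift
    variance = p_control * (1 - p_control) + p_test * (1 - p_test)
    n = (z_alpha + z_beta) ** 2 * variance / (p_test - p_control) ** 2
    return math.ceil(n)


def weeks_needed(n_per_group, weekly_users_per_group, min_weeks=4):
    """Convert a sample-size target into test weeks, never going below the minimum duration."""
    return max(min_weeks, math.ceil(n_per_group / weekly_users_per_group))


# Hypothetical inputs: 2% baseline conversion, hoping to detect a 10% relative lift
n = required_sample_per_group(baseline_rate=0.02, expected_lift=0.10)
print(n)                                               # a little over 80,000 users per group
print(weeks_needed(n, weekly_users_per_group=10_000))  # 9
```

If the estimate runs well past eight weeks, that can signal the audience is too small to detect the expected lift with a user-level test.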

5. Analyze and Act on Lift

Once the test finishes, compare outcomes between test and control groups to calculate incremental conversions, revenue, and efficiency metrics. Before making any decisions, confirm the lift is statistically significant. Then use those findings to guide how budget is allocated, where spend is scaled, and which channels are over- or under-contributing to growth.
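For a user-level test with a yes/no conversion outcome, one common significance check is a two-proportion z-test. The sketch below is a minimal version; the function name and input counts are hypothetical. It relies on a normal approximation that needs a reasonable number of conversions in each group, and it doesn't cover revenue metrics or GEO tests, which typically call for other methods.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(test_conv, test_size, control_conv, control_size):
    """Two-sided z-test for a difference in conversion rate between test and control."""
    p_test = test_conv / test_size
    p_control = control_conv / control_size
    # Under the null hypothesis (no lift), both groups share one conversion rate
    pooled = (test_conv + control_conv) / (test_size + control_size)
    se = math.sqrt(pooled * (1 - pooled) * (1 / test_size + 1 / control_size))
    z = (p_test - p_control) / se
    # Probability of a difference at least this large, in either direction, if the campaign had no effect
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


z, p = two_proportion_z_test(test_conv=300, test_size=10_000, control_conv=200, control_size=10_000)
print(f"z = {z:.2f}, p = {p:.6f}")
significant = p < 0.05  # conventional threshold; choose yours before the test starts
```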

What Gets in the Way of a Clean Test

Even small issues in how a test is set up or run can affect your data. These are the most common ones to watch for:

- Small sample sizes: User-level tests need a large enough audience to produce statistically significant data. If your audience size or budget is limited, GEO testing is often more reliable.

- Seasonality: Tests that overlap with promotions or seasonal spikes are harder to interpret. When possible, run tests during more stable periods or account for seasonality in your analysis.

- Audience spillover: If control groups are exposed to marketing through other channels, the test is no longer clean. GEO testing reduces this risk by isolating exposure at the market level.

- Short test durations: Incrementality tests typically need 4-8 weeks to produce statistically significant results. Shorter timeframes make it difficult to separate true lift from normal variation in purchasing behavior.

- Short-term performance tradeoffs: Holding back spend can feel counterproductive since it can impact short-term performance. But without a control group, it’s not possible to measure true incremental impact.

The Case for Incrementality

As signal loss increases and platform-reported data becomes less reliable, understanding incremental impact is necessary for making informed investment decisions. Brands that measure incrementality can more confidently scale what’s working, reduce spend in overcredited channels, and allocate budget more efficiently.

WITHIN helps consumer brands design and analyze incrementality tests, from test structure and market selection to lift analysis and budget implications, so you can understand what your campaigns are actually driving.
